[Newsmaker] 'Anti-Nth Room' legislation, an unfulfilled promise?

Platforms required to aggressively police some content, but measures not able to prevent another ‘Cyber Hell’ from opening, experts say

By Yoon Min-sik

Published: July 11, 2022 - 10:04

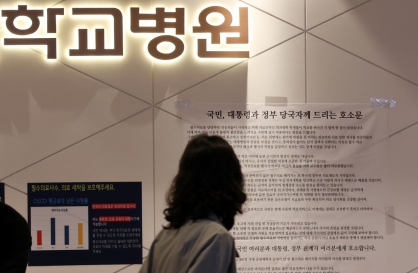

In late 2019, South Korea was shocked by an online sex crime ring that blackmailed over 70 young women, many of them minors, into filming sexually explicit content to be sold for profit in Telegram chat rooms.

The ensuing public outrage resulted in a series of legal changes, collectively known as the “anti-Nth Room” legislation.

The case, widely referred to as “Nth Room” after one of the chat rooms where the illicit content was shared and men paid to join, is the subject of Netflix’s true-crime documentary “Cyber Hell.”

The legislation, most of which passed the National Assembly in the summer of 2020 and went into effect in December 2021, introduced tougher penalties for online sex offenders as well as those who possess, purchase or distribute illicit sexual content.

Another core change was that providers of internet services and cloud storage services are now obliged to police their platforms to prevent and stop the spread of such content.

New rules in place

Late last month, the Korea Communications Commission released a report that offered a look at how businesses were complying with requirements to police content more strictly.

According to the report, a total of 87 providers say they have deleted 27,575 posts that were flagged for containing illicit videos throughout 2021.

Among the businesses are local behemoths Naver and Kakao, as well as multinational companies Google, Twitter and Facebook parent company Meta. But they do not include Telegram, the messenger service used for the crime that prompted the legislation. Nearly 66 percent of deletions were by Google, mostly in its search function and on YouTube.

The KCC’s report did not include the outcome of the providers’ preventative measures, another core responsibility required by the law, as many of them spent the last year preparing a filtering mechanism.

Another report, also released late last month, offers a look at how things are from a different angle.

That report is based on the work of an 801-strong citizens group in Seoul that monitored 35 online platforms for four months from July through October last year.

According to the group, 16,455 posts were reported to contain potentially illicit sexual content. Of them, 34 percent, or 5,584, were censored in some way by the platform operator. Some 3,047 were removed, while the rest were met with temporary withdrawals of the posts in question or temporary freezes on the account.

The posts left intact were either judged by the platforms not to be illicit or were cases in which it was not clear that the material broke the law. The platforms seem to be judging cases more strictly: In 2019 a similar survey found only 22.8 percent of reported content was taken offline.

In nearly half of the cases, it took more than a week for a flagged post to be taken down or suspended. Only 20 percent of the cases that were removed or sanctioned were handled within 24 hours.

Nearly two-thirds of the total 16,455 flagged items stayed intact during the four-month monitoring period.

On Tuesday, the Supreme Prosecutors’ Office said the country caught a total of 17,495 digital sex offenders last year, though only 28 percent of them were indicted.

Lingering questions

One of the “anti-Nth Room” laws is the revised Telecommunications Business Act that gives operators of related services the right and the obligation to check images, videos or files for inappropriate content before they are transmitted. It covers a vast spectrum of cyberspace, including open and group chat rooms, but not one-on-one private chat rooms.

Platform operators spent last year devising and experimenting with a filtering mechanism based on artificial intelligence. But the results have been unreliable.

Some of the censored content consisted of photos of a model in a bikini and videos of female YouTubers that were not filmed illegally, sparking further complaints.

“It possibly violates the basic rights guaranteed by Articles 18 (privacy of correspondence) and 21 (freedom of speech and the press) of the Constitutional Law, and could violate Article 3 of the Protection of Communications Secrets Act (protection of secrets of communication and conversation),” professor Lee Jun-bok of Seokyeong University wrote in a paper published in the Journal of Police Science by the Korean National Police University.

Lee pointed out concerns that forcing social media operators or mobile messengers to apply technical procedures to filter out inappropriate content is an excessive regulation.

He also pointed out the worry that a presidential decree allowing certain law enforcement officials to go undercover when investigating online sex exploitation schemes could be used by the government to monitor civilians and infringe on their privacy.

The biggest criticism facing the anti-Nth Room laws, however, is not about the implications for citizens’ rights. It is that the measures are unable to achieve the stated purpose of preventing another Nth Room.

While the sexual exploitation incident occurred on the Telegram messenger, the laws only apply to operators located within South Korea.

Earlier this year, a “cat Nth room” on Telegram was discovered by local media. Those in the chat room shared photos and videos of cruelly abused cats. An open chat room functioned as a waiting room for those hoping to join, who were given links to the room after validating their credentials as animal abusers by uploading related photos.

The room was shut down after one of its operators -- a man in his late 20s -- was arrested by police, but the remaining members created another “cat Nth room” on Australia-based messenger Session. The operator was a man calling himself “jjubini” -- a reference to Cho Ju-bin, one of the two leading culprits behind the Nth chat room.

Reports about illegal content being shared online, mostly via services based abroad, keep surfacing despite the enactment of the Nth room laws, raising questions about their effectiveness.

Stronger measures needed?

The Incheon Digital Sexual Crimes Prevention Center recently held a debate on the Nth room and other sexual crimes, during which limitations on current policies and laws were discussed.

Kim So-ra, a lecturer from the department of sociology at Jeju National University, stressed the need for a way to deal with foreign-based websites that repeatedly post sexually exploitive content.

In 2018, the Korea Cyber Sexual Violence Response Center filed charges against 136 websites with such content, based on the testimonies of the victims whose images were used in the illegal content. Out of the 172 accused, charges against 93 were dropped.

Kim pointed out that 34 of the accused were not indicted because it was impossible to specify their exact identity and location, highlighting the need for international cooperation in investigating such crimes.

Some legal experts have called for a clause allowing investigators forcible search and seizure of the accused, even without a warrant.

“The existing custom does not allow for an active search and seizure outside of the evidence voluntarily submitted by the accused,” said lawyer Baek So-yun of the GongGam Human Rights Law Foundation during the forum. She suggested allowing an exception for warrants to the Criminal Act when there is significant reason to suspect that the accused has committed a serious felony and there is a need for the immediate seizure of servers, hard drives or other storage devices.

Baek said that the current situation could leave victims of illegally filmed sexual content exposed to the threat of distribution, or the actual distribution, of such content after they report the case to the authorities. She said there needs to be a new clause in the Act on Special Cases Concerning the Punishment of Sexual Crimes that grants investigators the right to immediately order the deletion of sexually exploitive content upon securing the original files.
