Smart speaker privacy concerns spread to Korea

Naver, Kakao and mobile carriers admit their AI speakers collect recordings of voice commands, but downplay privacy concerns, say collected voice data ‘deidentified’

By Yeo Jun-suk

Published: Sept. 9, 2019 - 18:07

With Google, Amazon and Apple in hot water for enabling their artificial intelligence speakers to collect recordings of users’ voice commands, similar incidents are now occurring in South Korea, where the country’s tech giants have been found gathering personal dialogue with their speakers.

Naver and Kakao admitted last week that their AI-based interfaces have been collecting users’ audio data and converting it to written files. Such processes were also found to have been conducted by KT and SK Telecom to enhance the mobile carriers’ AI speaker performance.

While the companies asserted they aimed to improve their AI systems’ performance and took measures to protect users’ privacy, advocates are worried that smart speakers can eavesdrop on intimate aspects of users’ personal lives.

The number of Koreans using smart speakers has been increasing. According to 2018 data from Nasmedia, KT’s market research institute, about 3 million people used AI speakers. That number is expected to increase to 8 million by this year’s end.

Korean companies first introduced an AI-based voice command system in 2016, when SKT unveiled its smart speaker Nugu. It was followed by KT’s GiGA Genie, which connects with internet protocol TV and other home devices. Naver’s Clova and Kakao’s Kakao Mini followed suit in 2017.

Until recently, the mobile carriers’ smart speakers gained popularity among local consumers by connecting the AI devices to IPTV services. According to 2018 research from Consumer Insight, KT and SKT held market shares of 39 and 26 percent, respectively.

Meanwhile, Naver and Kakao have been increasing their appeal by connecting smart speakers with internet search engines and messenger services. Naver’s Korean market share in AI speakers was 16 percent as of last year, while Kakao claimed 12 percent, according to Consumer Insight.

Can they spy on us?

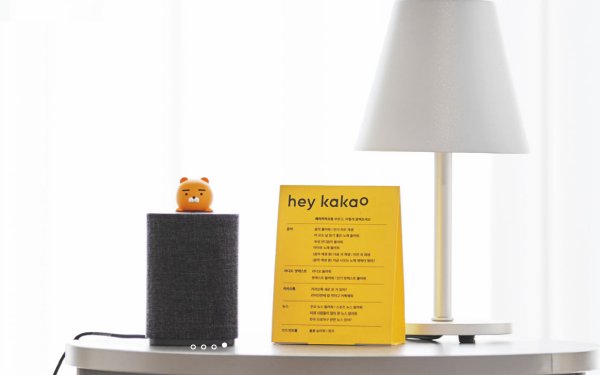

Local daily Hankook Ilbo reported on Sept. 2 that Naver had recorded what people said to its AI-based voice recognition service Clova. A similar allegation was subsequently made against Kakao’s AI interface Kakao Mini.

According to the reports, Naver and Kakao had enabled their smart speakers to collect recordings of people using voice commands. The recordings were subsequently transcribed by outside contractors working at affiliates.

The companies acknowledged that they operated teams engaged in the efforts, but said the collection was limited to times when the AI speakers had been “summoned.” The companies also asserted that the information was gathered to improve performance.

“In order to accurately assess Clova’s performance and improve its AI service capability, we store data when users make a voice command,” Naver said in a statement released Sept. 3. “Unless it is called upon, Clova does not collect any dialogue data.”

According to the company, about 1 percent of users’ voice commands were recorded when they were trying to communicate with the Clova AI system. Kakao said it randomly collected 0.2 percent of commands.

The recordings were anonymized and then transcribed by humans, and the transcripts were compared with what the AI devices had recognized. The results of that comparison were then sent back to all devices to improve performance on future tasks.

KT and SKT said they have been applying similar security measures to their smart speakers. According to the companies, only a small fraction of anonymized data was selected for voice recognition analysis by their contracted workers.

“Before our contracted workers analyzed data, we conducted voice modulation and deleted personal data to prevent people from figuring out users’ identity,” an SKT official told The Korea Herald.

Despite the explanations, such activities have prompted privacy concerns among smart speaker users, who point to the mere possibility of sensitive information being recorded, shared and even used against them.

“Now that I know what the AI speakers are capable of, I’m starting to worry about what my daughters say to AI speakers,” said Lee Yeon-soo, who owns several smart speakers placed in his home. “I should figure out ways to use them more safely and smartly.”

‘Deidentification’

Naver, Kakao and the mobile carriers say they take action to prevent transcribers from figuring out where the data has come from, so their voice collection activities should not constitute a privacy violation.

Korea’s privacy protection law prevents companies from gathering personal information without prior consent from users. The government came up with relaxed guidelines in 2016, allowing the use of personal information if it does not reveal users’ identities.

According to the companies, the recorded voice commands go through a “deidentification” process before being sent to an outside contractor for human transcription. Sensitive personal information is removed during the process, the companies said.

“It is like removing identification tags from recorded voice commands. Analysts can’t tell which smart speakers sent voice recordings,” said an official from KT. “And the recordings are ‘encrypted’ with the deletion of personal information.”

The companies said they scrub the ID information from the recordings and check the text generated from the speakers’ voice recognition for information that could identify a user. Recordings containing names, addresses or schools, for example, are not sent.
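The steps the companies describe (sampling a small share of commands, dropping the device ID and withholding recordings whose recognized text contains identifying details) can be sketched roughly as follows. This is only an illustration under stated assumptions, not the companies’ actual implementation: the `Recording` type, the `SENSITIVE_PATTERNS` list and the function names are all hypothetical, and real systems would need far more thorough detection of personal information.

```python
import random
import re
from dataclasses import dataclass


@dataclass
class Recording:
    device_id: str   # hypothetical identification tag attached to a recording
    transcript: str  # text produced by the speaker's own voice recognition


# Hypothetical, deliberately simplistic patterns standing in for the
# "names, addresses or schools" check mentioned in the article.
SENSITIVE_PATTERNS = [
    re.compile(r"\bmy name is\b", re.IGNORECASE),
    re.compile(r"\bmy address is\b", re.IGNORECASE),
    re.compile(r"\b(elementary|middle|high) school\b", re.IGNORECASE),
]


def sample(recordings, rate, rng):
    """Randomly keep roughly `rate` of the recordings (e.g. 0.01 or 0.002)."""
    return [rec for rec in recordings if rng.random() < rate]


def deidentify(recordings):
    """Drop recordings with identifying text and strip the device ID from the rest."""
    cleaned = []
    for rec in recordings:
        if any(p.search(rec.transcript) for p in SENSITIVE_PATTERNS):
            continue  # withheld entirely, never sent for transcription
        cleaned.append(rec.transcript)  # device_id is intentionally discarded
    return cleaned


if __name__ == "__main__":
    commands = [
        Recording("dev-1", "play some jazz"),
        Recording("dev-2", "My name is Kim"),
        Recording("dev-3", "call Seoul High School"),
    ]
    print(deidentify(commands))
```

In this sketch only the text of the first command would be passed on, with no device tag attached. Kakao’s approach, as described in the article, differs in that some identification tags are kept so that data can be found and destroyed when a user leaves the service.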

A Naver official told The Korea Herald that the listening activity was designed to pick out clear examples of words to optimize the voice recognition system, rather than monitoring commands or sentences.

“That data was even delivered in individual words, so the analysts can’t figure out whole sentences either,” he said.

“Our voice collection activities are designed to improve Clova’s performance and make sure it works properly when being called upon. … We believe that similar work is underway by those developing AI speakers here and abroad.”

Kakao said that while it does not apply the deidentification process to every voice command collected by Kakao Mini, its privacy protection measures could be stricter than its competitors’.

According to Kakao, the deidentification process is applied when it analyzes the 0.2 percent of voice commands it collects. The approach is designed to make it easier to determine which personal data should be destroyed when Kakao users want to stop using voice recognition services.

“When Kakao users withdraw from our services, we destroy their personal data, such as voice commands, immediately,” a Kakao official said. “That is why we kept some identification tags in smart speakers to track down more easily which data we should scrub.”

The companies also stressed that their AI speakers’ voice collection activities comply with their users’ terms of service, which specify the companies can collect voice commands to improve the speakers’ performance.

Experts, meanwhile, said privacy issues in the era of big data will remain sensitive regardless of whether the practices in question are legal.

“As long as there is no specific information that can tell who creates a voice command, it is hard to make the case for violation of privacy laws. … Those voice commands can be seen as a part of big data used by companies,” an attorney at a Seoul-based law firm said, requesting anonymity.

“However, if (Kakao or Naver) employees or any third-party people happen to catch something that the smart speaker users don’t want others to hear, there is always a possibility that it can infringe upon the people’s basic right for privacy.”

Some experts noted that Naver and Kakao should follow global tech giants’ footsteps, giving users a greater sense of control.

Amazon recently unveiled a privacy protection tool that can make it easier for Alexa users to delete records of their digital conversations. Through Alexa Privacy Hub, users can delete their voice recordings by changing the device’s privacy settings.

“If voice recordings were to be leaked, it could constitute a grave privacy violation. The companies need to take extra steps to manage such data,” said Sung Dong-kyu, a professor in ChungAng University’s media communication department.

While Naver and Kakao pledged to introduce such features in their smart speakers, they have yet to announce an exact timeline. In a statement last week, Naver said it would become the first Korean company to adopt a privacy protection tool like Amazon’s.

(jasonyeo@heraldcorp.com)
